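Preface:

This guide assumes that the three virtual machines can already ping one another, the firewall is disabled, the hosts file has been edited, passwordless SSH login is configured, and the hostnames have been set.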

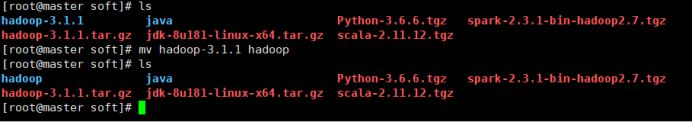

I. Upload the files

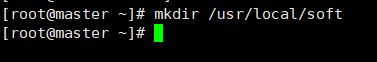

1. Create the installation directory

mkdir /usr/local/soft

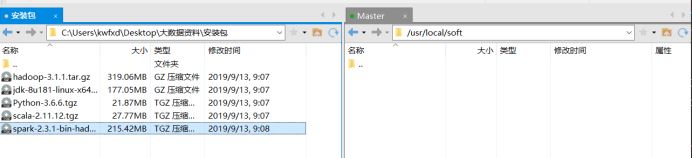

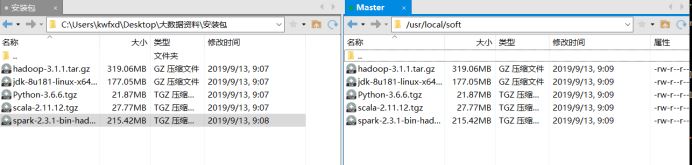

2. Open Xftp, navigate to that directory, and upload the required installation packages

Check the uploaded packages: cd /usr/local/soft && ls

II. Install Java

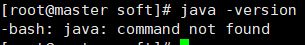

1. Check whether a JDK is already installed: java -version

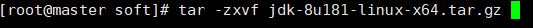

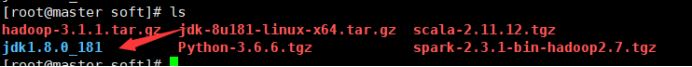

2. If it is not installed, extract the Java package: tar -zxvf jdk-8u181-linux-x64.tar.gz

(Your package file name may differ; adjust the command accordingly.)

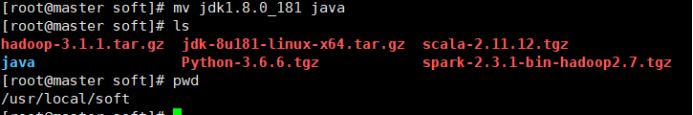

3. Rename the JDK directory and confirm the result: mv jdk1.8.0_181 java

4. Configure the JDK environment: vim /etc/profile.d/jdk.sh

export JAVA_HOME=/usr/local/soft/java

export PATH=$PATH:$JAVA_HOME/bin

export CLASSPATH=.:$JAVA_HOME/lib/tools.jar:$JAVA_HOME/lib/rt.jar

5. Reload the environment variables, then verify with java -version: source /etc/profile
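If `java -version` still fails after reloading the profile, the usual cause is a JAVA_HOME that does not point at the renamed directory. The following is a minimal sketch of a check for that; the function name `check_java_home` is hypothetical and not part of the JDK or Hadoop.

```shell
# Report whether the directory given as $1 looks like a usable JDK home,
# i.e. whether it contains an executable bin/java.
check_java_home() {
    jdk_home="$1"
    if [ -x "$jdk_home/bin/java" ]; then
        echo "ok: $jdk_home/bin/java is executable"
    else
        echo "missing: $jdk_home/bin/java not found or not executable"
        return 1
    fi
}

# Typical use after `source /etc/profile`:
#   check_java_home "$JAVA_HOME" && java -version
```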

III. Install Hadoop

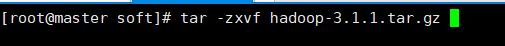

1. Extract the Hadoop package: tar -zxvf hadoop-3.1.1.tar.gz

2. Check the result and rename the directory: mv hadoop-3.1.1 hadoop

3. Edit the Hadoop configuration files

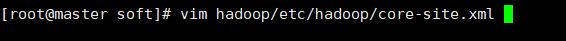

3.1 Edit core-site.xml: vim hadoop/etc/hadoop/core-site.xml

<property>

<name>fs.defaultFS</name>

<value>hdfs://master:9000</value>

</property>

<property>

<name>hadoop.tmp.dir</name>

<value>file:/usr/local/soft/hadoop/tmp</value>

<description>Abase for other temporary directories.</description>

</property>

<property>

<name>fs.trash.interval</name>

<value>1440</value>

</property>
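The property blocks above have to sit inside the file's single `<configuration>` root element; the same applies to hdfs-site.xml and yarn-site.xml. As a sketch of what the finished file looks like, the helper below writes a complete core-site.xml with the three properties from this step. The function name `write_core_site` is hypothetical, and writing the file from a script instead of editing it in vim is only one possible approach.

```shell
# Write a complete core-site.xml to the path given as $1.
# The property values are exactly the ones listed in step 3.1.
write_core_site() {
    cat > "$1" <<'EOF'
<?xml version="1.0" encoding="UTF-8"?>
<configuration>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://master:9000</value>
  </property>
  <property>
    <name>hadoop.tmp.dir</name>
    <value>file:/usr/local/soft/hadoop/tmp</value>
    <description>Abase for other temporary directories.</description>
  </property>
  <property>
    <name>fs.trash.interval</name>
    <value>1440</value>
  </property>
</configuration>
EOF
}

# Example, run from /usr/local/soft:
#   write_core_site hadoop/etc/hadoop/core-site.xml
```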

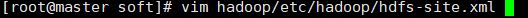

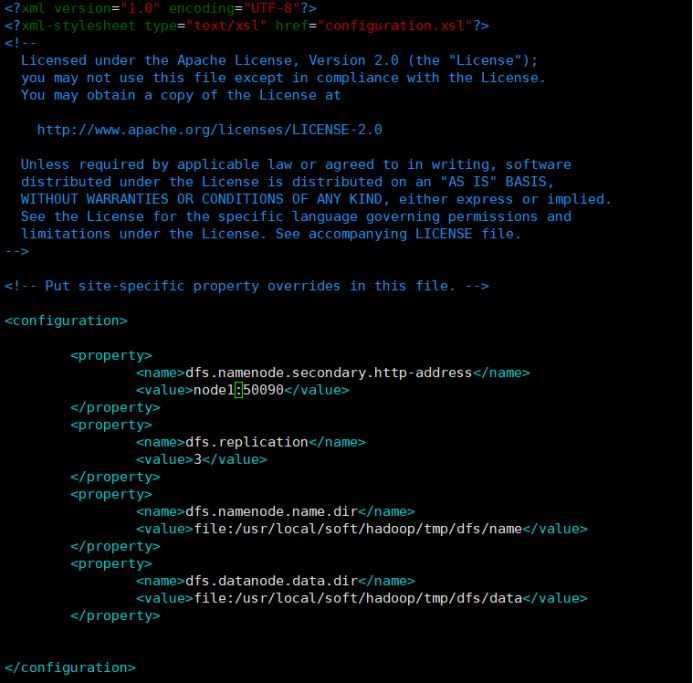

3.2 Edit hdfs-site.xml: vim hadoop/etc/hadoop/hdfs-site.xml

<property>

<name>dfs.namenode.secondary.http-address</name>

<value>node1:50090</value>

</property>

<property>

<name>dfs.replication</name>

<value>3</value>

</property>

<property>

<name>dfs.namenode.name.dir</name>

<value>file:/usr/local/soft/hadoop/tmp/dfs/name</value>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>file:/usr/local/soft/hadoop/tmp/dfs/data</value>

</property>

3.3 Edit the workers file: vim hadoop/etc/hadoop/workers
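The workers file lists the hosts that run DataNode and NodeManager, one hostname per line. The exact list for this cluster is not given in the text; the sketch below assumes all three machines (master, node1, node2) act as workers, which would match dfs.replication being 3. Treat that host list, and the function name `write_workers`, as assumptions.

```shell
# Write one hostname per line to the workers file given as $1.
# The remaining arguments are the worker hostnames.
write_workers() {
    workers_file="$1"
    shift
    printf '%s\n' "$@" > "$workers_file"
}

# Assumed usage, run from /usr/local/soft (the host list is a guess):
#   write_workers hadoop/etc/hadoop/workers master node1 node2
```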

3.4 Edit hadoop-env.sh: vim hadoop/etc/hadoop/hadoop-env.sh

export JAVA_HOME=/usr/local/soft/java

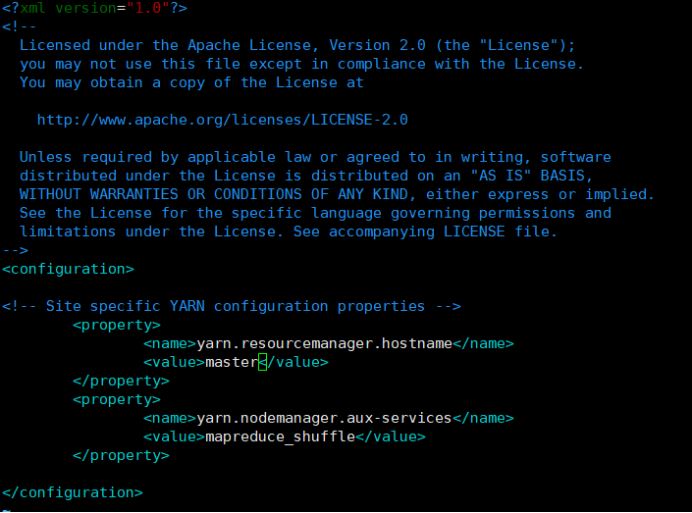

3.5 Edit yarn-site.xml: vim hadoop/etc/hadoop/yarn-site.xml

<property>

<name>yarn.resourcemanager.hostname</name>

<value>master</value>

</property>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

3.6 Apply the updated configuration: source hadoop/etc/hadoop/hadoop-env.sh

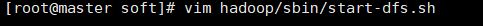

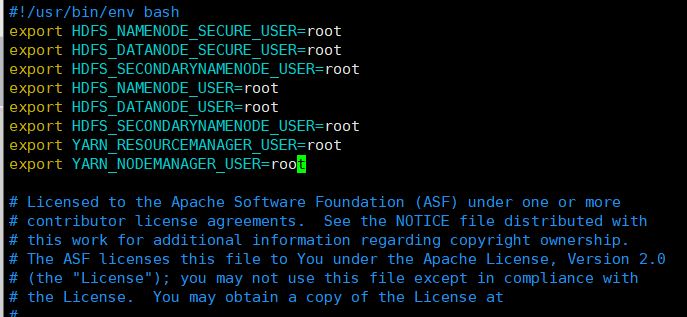

3.7 Edit start-dfs.sh: vim hadoop/sbin/start-dfs.sh

export HDFS_NAMENODE_SECURE_USER=root

export HDFS_DATANODE_SECURE_USER=root

export HDFS_SECONDARYNAMENODE_USER=root

export HDFS_NAMENODE_USER=root

export HDFS_DATANODE_USER=root


export YARN_RESOURCEMANAGER_USER=root

export YARN_NODEMANAGER_USER=root

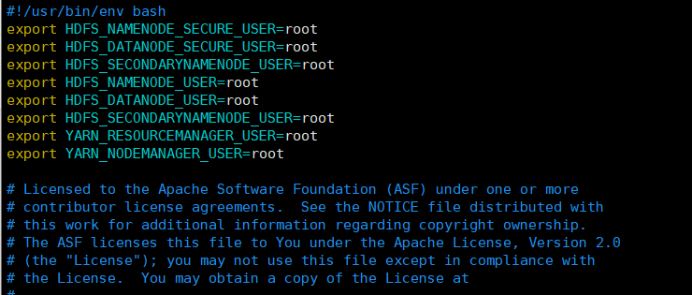

3.8 Edit stop-dfs.sh: vim hadoop/sbin/stop-dfs.sh

export HDFS_NAMENODE_SECURE_USER=root

export HDFS_DATANODE_SECURE_USER=root

export HDFS_SECONDARYNAMENODE_USER=root

export HDFS_NAMENODE_USER=root

export HDFS_DATANODE_USER=root


export YARN_RESOURCEMANAGER_USER=root

export YARN_NODEMANAGER_USER=root

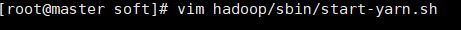

3.9 Edit start-yarn.sh: vim hadoop/sbin/start-yarn.sh

export YARN_RESOURCEMANAGER_USER=root

export HADOOP_SECURE_DN_USER=root

export YARN_NODEMANAGER_USER=root

3.10 Edit stop-yarn.sh: vim hadoop/sbin/stop-yarn.sh

export YARN_RESOURCEMANAGER_USER=root

export HADOOP_SECURE_DN_USER=root

export YARN_NODEMANAGER_USER=root

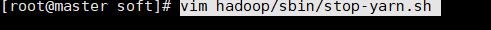

3.11 Suppress the native-library warning message: vim hadoop/etc/hadoop/log4j.properties

log4j.logger.org.apache.hadoop.util.NativeCodeLoader=ERROR

IV. Sync the configuration:

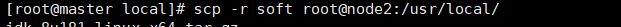

1. Sync to node1 (run from /usr/local): scp -r soft root@node1:/usr/local/

Sync to node2: scp -r soft root@node2:/usr/local/
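With more than a couple of nodes, the two scp commands are easier to maintain as a loop. This is a small sketch under the same assumptions as the text (root login, passwordless SSH, hosts named node1 and node2); the function name `sync_dir_to_nodes` and the `DRY_RUN` switch are hypothetical additions for illustration.

```shell
# Copy a directory to the same parent path on each listed node.
# Set DRY_RUN=1 to print the scp commands instead of running them.
sync_dir_to_nodes() {
    src_dir="$1"      # e.g. /usr/local/soft
    dest_parent="$2"  # e.g. /usr/local/
    shift 2
    for node in "$@"; do
        if [ -n "${DRY_RUN:-}" ]; then
            echo "scp -r $src_dir root@$node:$dest_parent"
        else
            # Stop at the first failed transfer so a broken node is noticed.
            scp -r "$src_dir" "root@$node:$dest_parent" || return 1
        fi
    done
}

# Example:
#   sync_dir_to_nodes /usr/local/soft /usr/local/ node1 node2
```

Running it once with `DRY_RUN=1` shows the exact commands before anything is copied.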

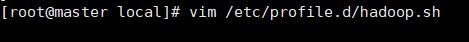

2. After all transfers finish, create the Hadoop profile script: vim /etc/profile.d/hadoop.sh

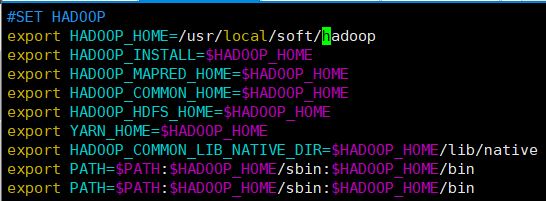

#SET HADOOP

export HADOOP_HOME=/usr/local/soft/hadoop

export HADOOP_INSTALL=$HADOOP_HOME

export HADOOP_MAPRED_HOME=$HADOOP_HOME

export HADOOP_COMMON_HOME=$HADOOP_HOME

export HADOOP_HDFS_HOME=$HADOOP_HOME

export YARN_HOME=$HADOOP_HOME

export HADOOP_COMMON_LIB_NATIVE_DIR=$HADOOP_HOME/lib/native

export PATH=$PATH:$HADOOP_HOME/sbin:$HADOOP_HOME/bin


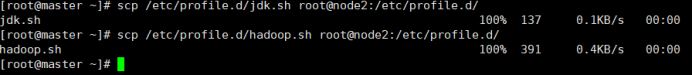

3. Copy the profile scripts to the other nodes

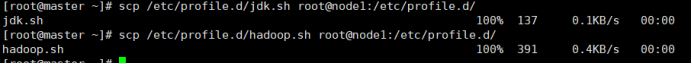

To node1: scp /etc/profile.d/jdk.sh root@node1:/etc/profile.d/

scp /etc/profile.d/hadoop.sh root@node1:/etc/profile.d/

To node2: scp /etc/profile.d/jdk.sh root@node2:/etc/profile.d/

scp /etc/profile.d/hadoop.sh root@node2:/etc/profile.d/

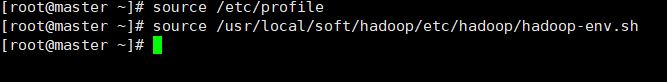

4. Run the following on all three virtual machines

source /etc/profile

source /usr/local/soft/hadoop/etc/hadoop/hadoop-env.sh

(Only one machine is shown.)

5. Format the HDFS file system (on master only): hdfs namenode -format

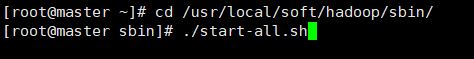

V. Start the cluster

cd /usr/local/soft/hadoop/sbin/

./start-all.sh

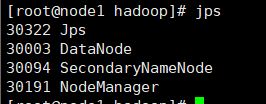

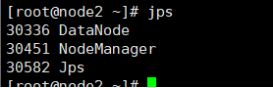

After startup, run jps on each of the three virtual machines.

The results are as follows:
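Which daemons to expect follows from the configuration above: NameNode and ResourceManager on master (fs.defaultFS and yarn.resourcemanager.hostname), SecondaryNameNode on node1 (dfs.namenode.secondary.http-address), and DataNode plus NodeManager on every host listed in workers. The sketch below compares jps output against such a list; the function name `missing_daemons` is hypothetical.

```shell
# Print every expected daemon name that is absent from the jps output.
# $1 is the jps output; the remaining arguments are the expected names.
missing_daemons() {
    jps_output="$1"
    shift
    for daemon in "$@"; do
        # jps prints "<pid> <ClassName>"; compare the second field exactly
        # so that SecondaryNameNode is not mistaken for NameNode.
        if ! printf '%s\n' "$jps_output" |
            awk -v d="$daemon" '$2 == d { found = 1 } END { exit !found }'; then
            echo "$daemon"
        fi
    done
}

# Example on master:
#   missing_daemons "$(jps)" NameNode ResourceManager
```

An empty result means every listed daemon is running on that machine.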

Verify in Google Chrome on Windows:

http://192.168.204.120:8088/cluster (substitute the IP address of your own master)

http://192.168.204.120:9870

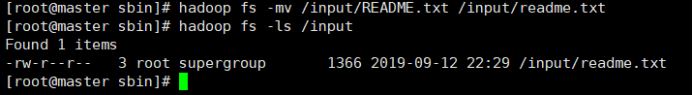

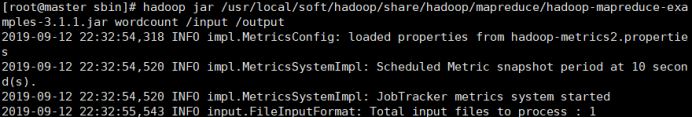

Hadoop test (running a MapReduce computation):

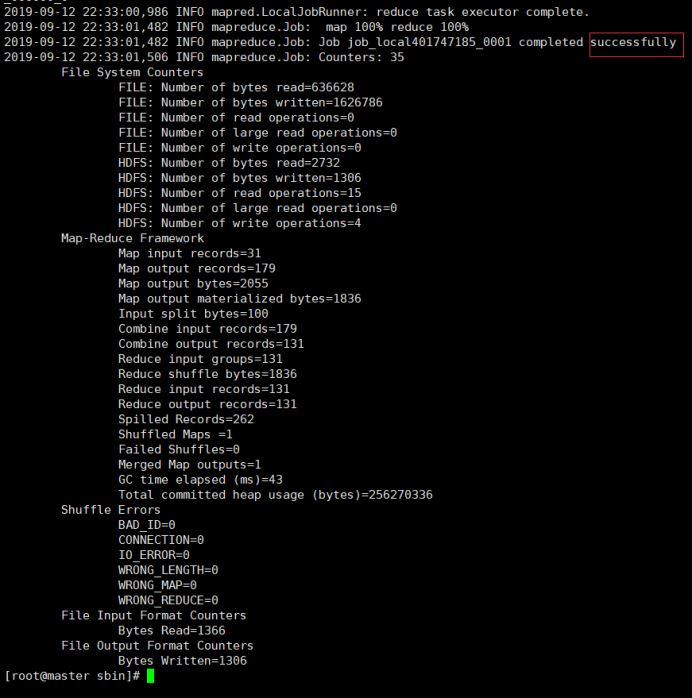

hadoop jar /usr/local/soft/hadoop/share/hadoop/mapreduce/hadoop-mapreduce-examples-3.1.1.jar wordcount /input /output

View the results:
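The wordcount job needs /input to exist in HDFS with at least one text file in it, and it fails if /output already exists. A minimal sketch of the whole test follows, assuming some local plain-text file to upload; the function names `run` and `wordcount_demo`, the `DRY_RUN` switch, and the choice of input file are hypothetical.

```shell
# Run a command, or just print it when DRY_RUN is set.
run() {
    if [ -n "${DRY_RUN:-}" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# Upload a local text file to /input, run wordcount, and print the result.
# $1 is a local plain-text file (any file works; the path is up to you).
wordcount_demo() {
    input_file="$1"
    examples_jar=/usr/local/soft/hadoop/share/hadoop/mapreduce/hadoop-mapreduce-examples-3.1.1.jar
    run hdfs dfs -mkdir -p /input &&
    run hdfs dfs -put -f "$input_file" /input &&
    run hadoop jar "$examples_jar" wordcount /input /output &&
    # Reducer output lands in files named part-r-NNNNN under /output.
    run hdfs dfs -cat '/output/part-r-*'
}

# Example:
#   wordcount_demo /etc/hosts
```

To rerun the job, remove the old output first with `hdfs dfs -rm -r /output`.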

The Hadoop configuration is now complete.

Summary

This has been a guide to a fully distributed installation of Hadoop 3.1.1 on CentOS 6.8. I hope it helps; if you have any questions, please leave a comment.
